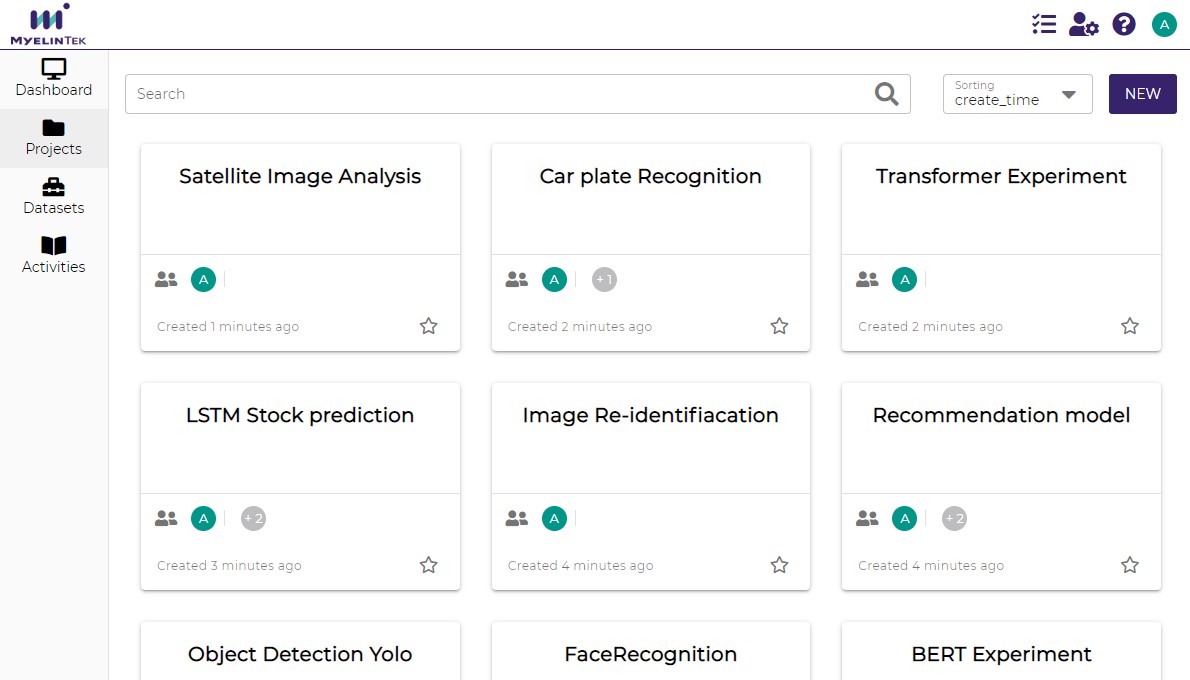

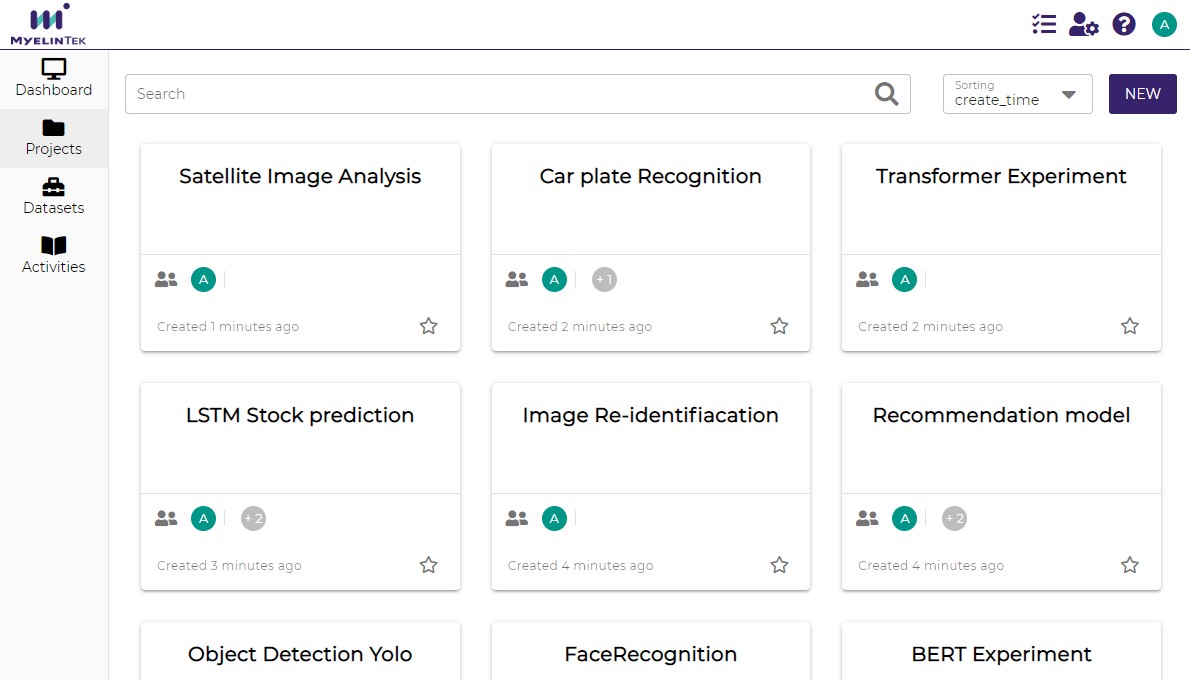

MLSteam AI Platform - the DNN life cycle system, MLOps

MLSteam AI Platform provides the complete software stack required for deep learning model development and model serving. Through a user-friendly web interface, users can manage training datasets, schedule training jobs, manage multiple servers, view hardware resource usage, and more.

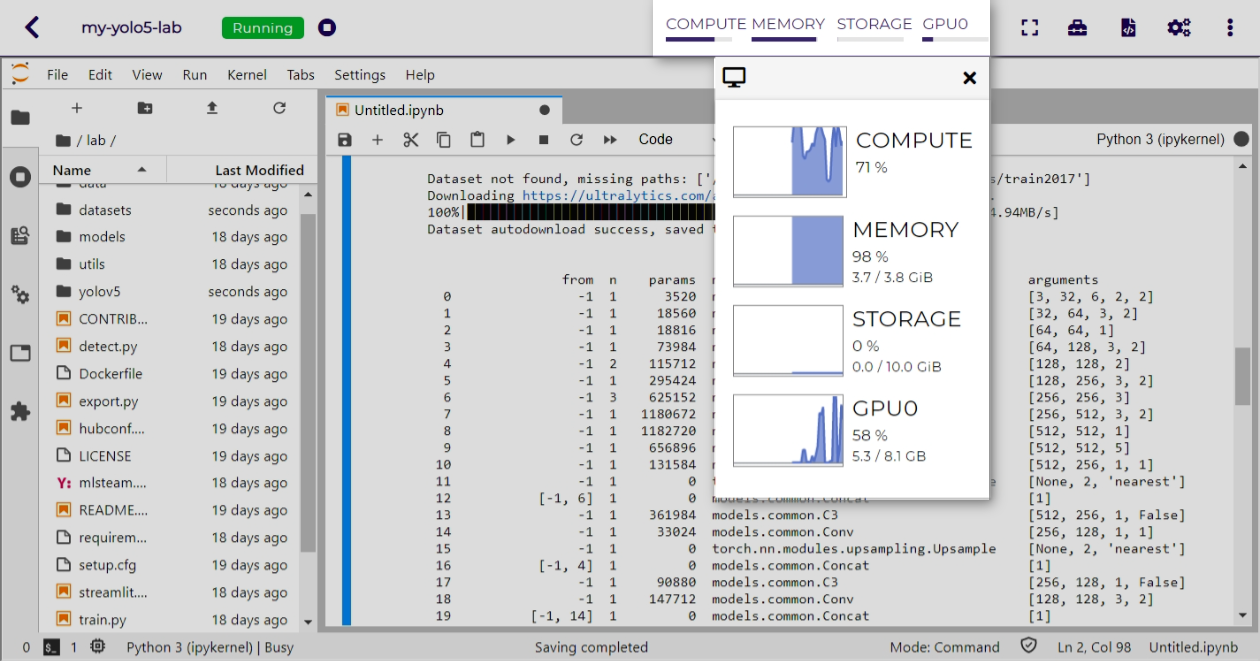

Cloud IDE

Users can easily create a Cloud IDE (based on JupyterLab) for DNN model development or data preprocessing by attaching their dataset. The Cloud IDE also provides utilities such as hyperparameter passing, third-party IDE integration (VSCode and PyCharm), TensorBoard, and GPU monitoring to simplify the training process.


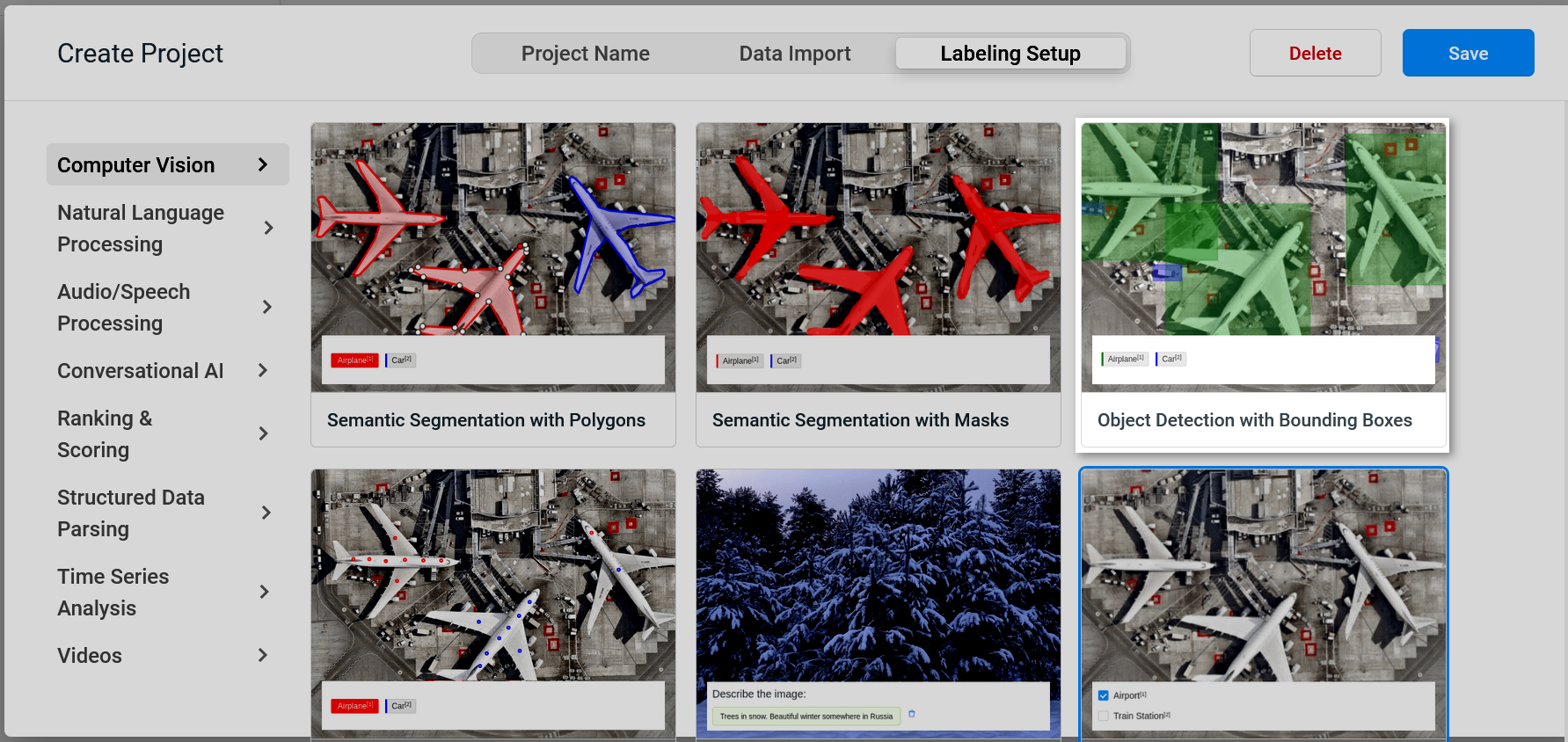

Data labeling

Well-integrated labeling tools support multiple annotation formats for tasks such as computer vision, natural language processing, audio/speech recognition, and time series analysis. Labeled data stays in the same system, with no need to transfer it in or out.

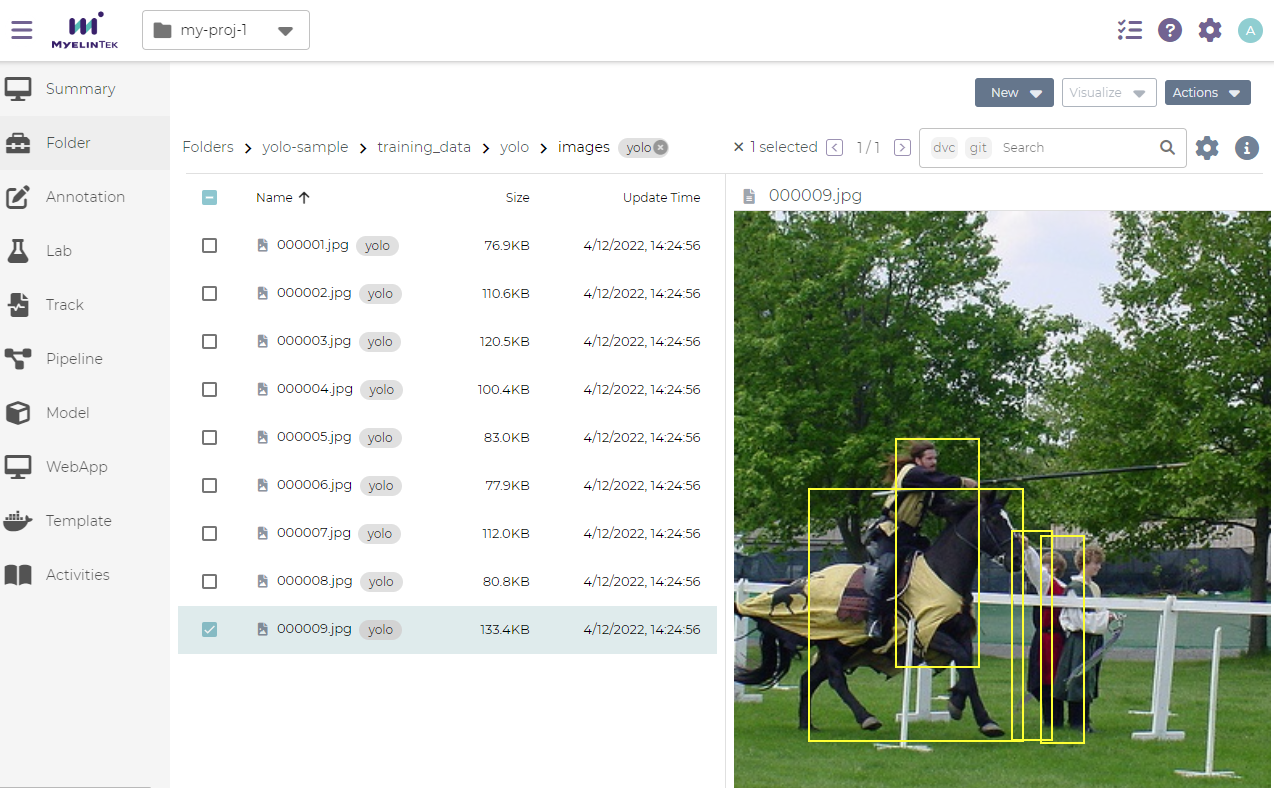

Intuitive dataset management

MLSteam provides a file-browser-style management interface. Users can preview image files, delete files, and download files by selecting the target files. To upload files, simply drag and drop them from your PC to the dataset. MLSteam supports multiple dataset annotation formats, so users can preview an annotated dataset on the dataset page. The platform can also connect to a user's file server via NFS/CIFS, so existing data can be used to create models.


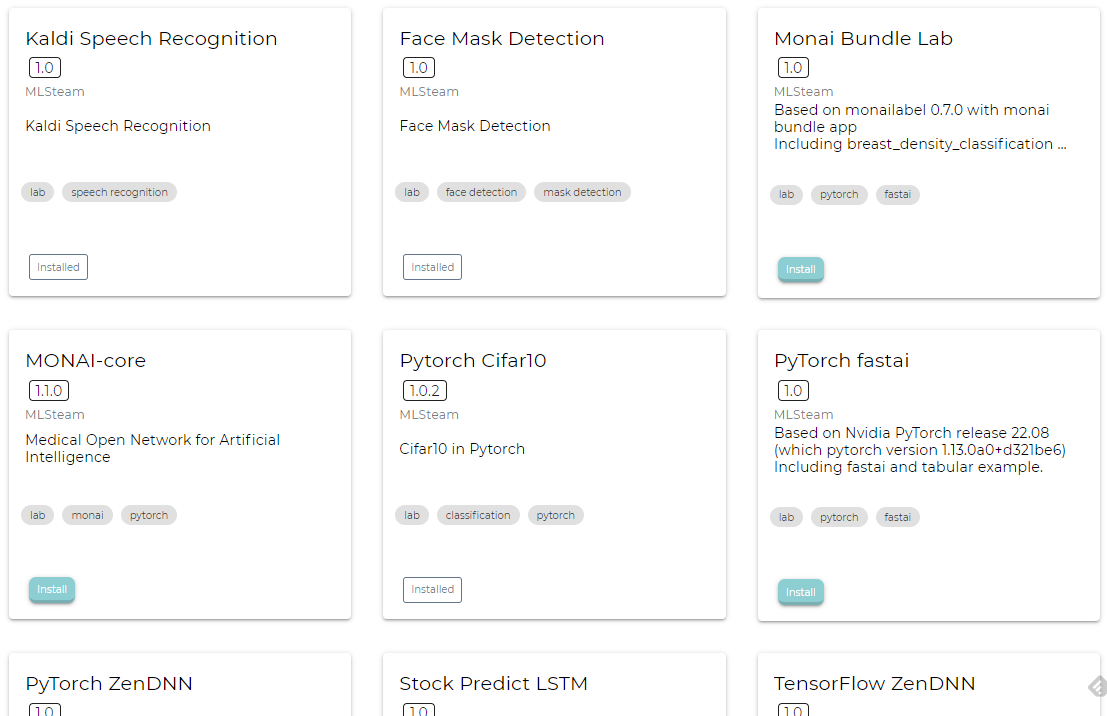

Reuse and Reproduce

MLSteam has built-in templates for common ML tasks. Users can also create templates to share existing work with teammates in the same project. NVIDIA NIM is supported as an MLSteam template to serve an OpenAI-compatible inference API.
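To illustrate what "OpenAI-compatible" means for a client, here is a minimal sketch that builds (without sending) a chat-completions request using only the Python standard library. The `/v1/chat/completions` path follows the OpenAI API convention; the host `mlsteam.example`, the port, and the model name `example-model` are placeholders assumed for illustration, not values from MLSteam or NIM.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build, but do not send, a chat-completions request in the OpenAI wire format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical endpoint and model name, for illustration only.
request = build_chat_request("http://mlsteam.example:8000", "example-model", "Hello")

# To actually call a running service, pass the request to urllib.request.urlopen(request).
```

Because the request shape is the standard one, existing OpenAI client code should in principle only need its base URL changed to point at the deployed service.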

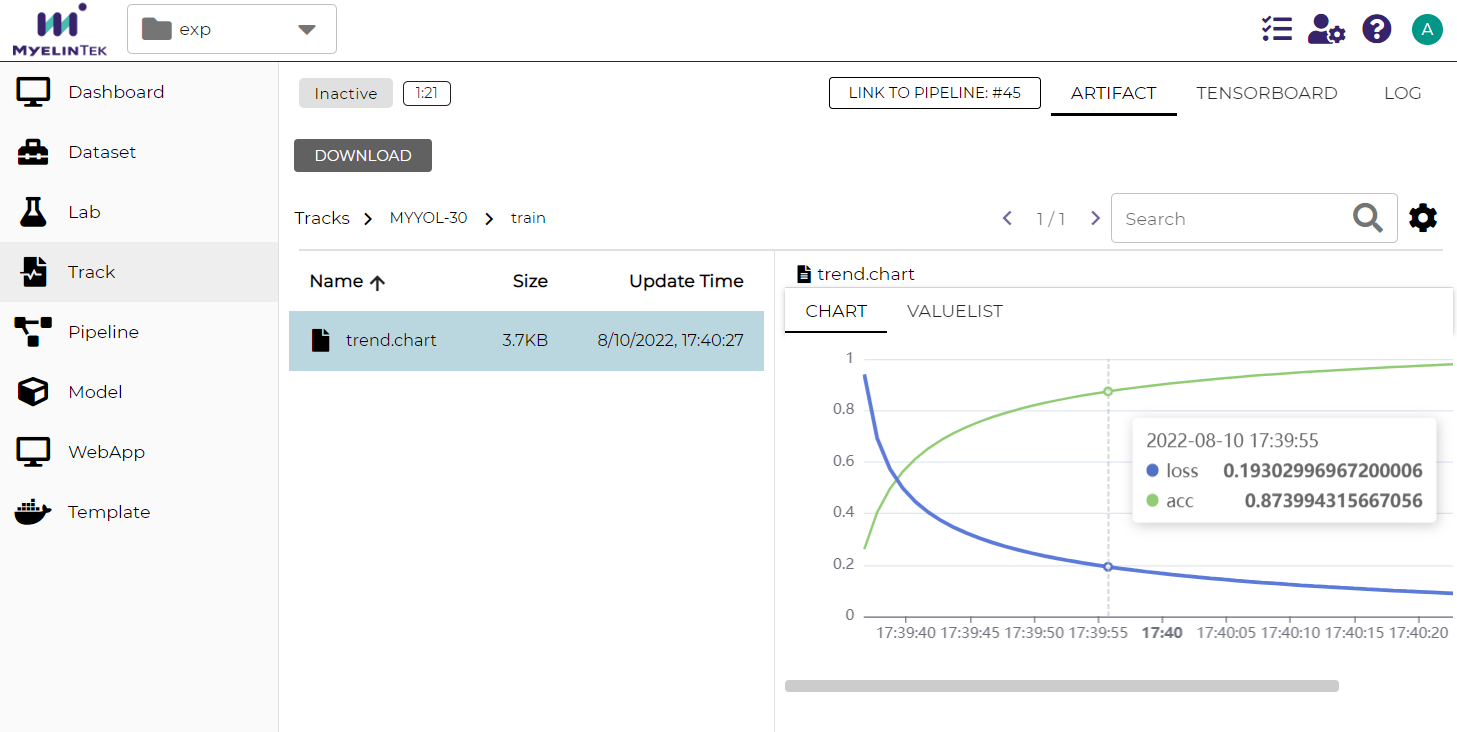

Training Experiment Tracking

A track keeps the results of an ML training run or experiment, including the parameters, metrics, console logs, and any logged files or data. Results can also be visualized with TensorBoard.
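As a rough picture of what a track holds, the sketch below models one as a plain Python dataclass. This is not the MLSteam API; the `Track` class, its field names, and its methods are hypothetical and exist only to show how parameters, metrics, logs, and files can sit together in one record.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Track:
    """Hypothetical record of one training run (not an MLSteam class)."""
    name: str
    params: dict = field(default_factory=dict)
    metrics: dict = field(default_factory=dict)  # metric name -> list of (step, value)
    logs: list = field(default_factory=list)     # console log lines
    files: list = field(default_factory=list)    # paths of logged files

    def log_metric(self, key: str, step: int, value: float) -> None:
        """Append one (step, value) point to the named metric series."""
        self.metrics.setdefault(key, []).append((step, value))

    def best(self, key: str, mode: str = "min") -> float:
        """Return the lowest (mode='min') or highest recorded value of a metric."""
        values = [value for _, value in self.metrics[key]]
        return min(values) if mode == "min" else max(values)

    def to_json(self) -> str:
        """Serialize the whole track so runs can be compared or archived."""
        return json.dumps(asdict(self))


track = Track(name="run-1", params={"lr": 0.01, "batch_size": 32})
track.log_metric("loss", step=1, value=0.9)
track.log_metric("loss", step=2, value=0.5)
track.logs.append("epoch 2 finished")
```

Keeping parameters next to metrics is what makes a run reproducible: the record says both what was tried and how it performed.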


Continuous Training

You may define a pipeline for a subset of common ML tasks. You may even define an end-to-end MLOps pipeline that retrains and evaluates the model for new model designs or datasets and finally deploys the ML application to an experimental or production site.
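The retrain, evaluate, deploy flow described above can be sketched in plain Python. The function `run_pipeline`, its parameters, and the rule of deploying only when the score improves are assumptions made for illustration; MLSteam's own pipeline definition format is not shown here.

```python
from typing import Any, Callable, Dict


def run_pipeline(
    train: Callable[[], Any],
    evaluate: Callable[[Any], float],
    deploy: Callable[[Any], None],
    current_score: float,
    min_gain: float = 0.0,
) -> Dict[str, Any]:
    """Retrain, evaluate, and deploy only if the new model beats the current one."""
    model = train()
    score = evaluate(model)
    if score > current_score + min_gain:
        deploy(model)
        return {"deployed": True, "score": score}
    return {"deployed": False, "score": score}


# Stub stages standing in for real training, evaluation, and deployment steps.
deployed_models = []
result = run_pipeline(
    train=lambda: "model-v2",
    evaluate=lambda model: 0.9,
    deploy=deployed_models.append,
    current_score=0.8,
)
```

Gating deployment on an evaluation score is one common design choice; a pipeline could equally deploy to an experimental site first and promote to production later.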


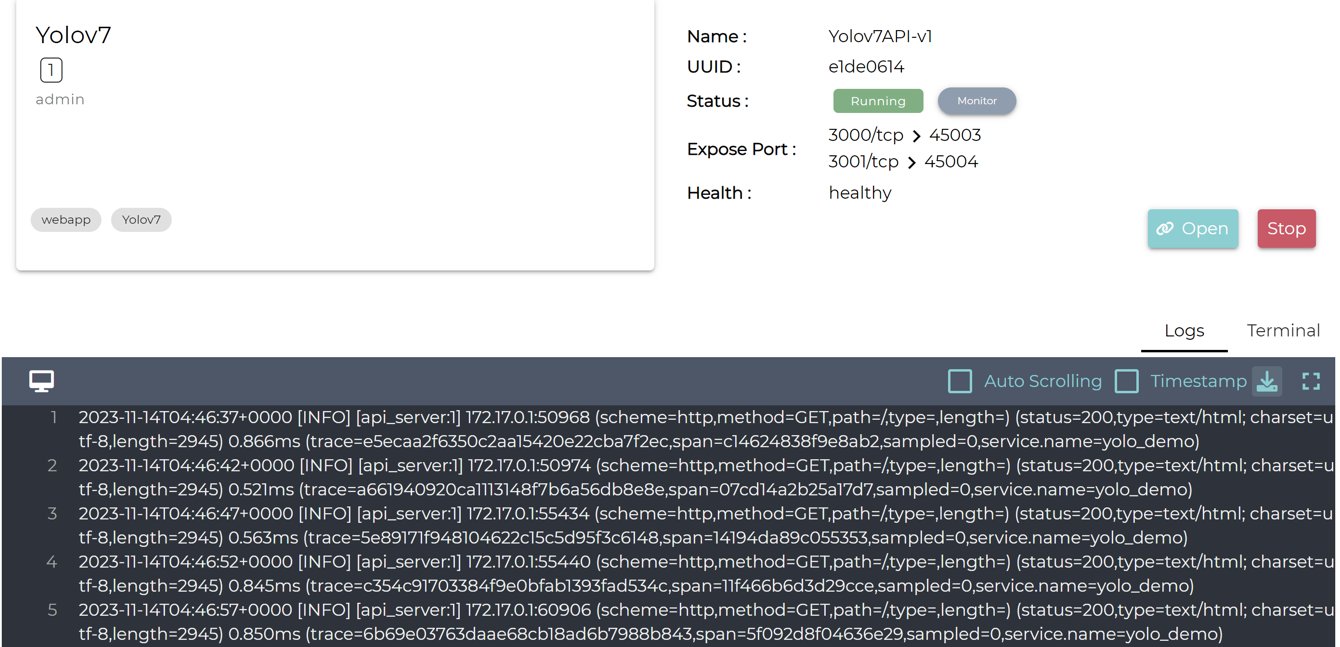

Deploy model as web service

The MLSteam WebApp feature enables simple deployment of web-based ML applications. Services for project users may also be provided as a WebApp. Users can attach a versioned AI model from the MLSteam model repository.


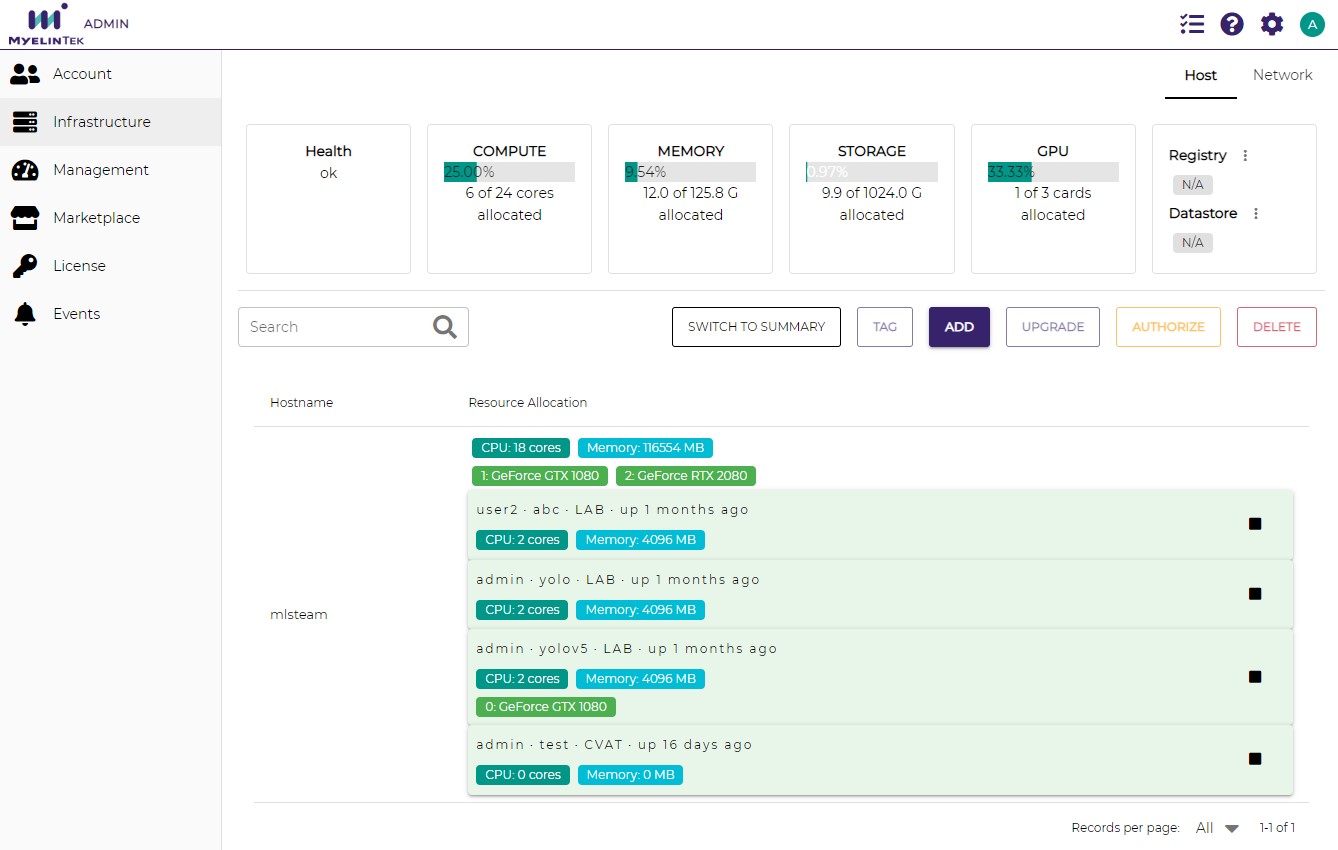

Support CUDA and ROCm

MLSteam is built on CUDA and ROCm and runs in NVIDIA and AMD GPU environments, scheduling each workload to the matching GPU environment. MLSteam aims to support the latest hardware and software stack, including the latest A100 GPU from NVIDIA. MIG (Multi-Instance GPU) can be easily enabled on the platform. MLSteam also supports the latest MI200 GPU from AMD, with zero configuration and an easy, fast setup for DNN developers.
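The idea of sending each workload to a matching GPU environment can be pictured with a small sketch. The `Node` class, the `pick_node` function, and the node names are hypothetical; this shows a simple first-fit match on runtime and free GPUs, not MLSteam's actual scheduler.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical description of a GPU server."""
    name: str
    runtime: str      # "cuda" for NVIDIA nodes, "rocm" for AMD nodes
    free_gpus: int


def pick_node(job_runtime: str, gpus_needed: int, nodes: List[Node]) -> Optional[str]:
    """Return the first node whose runtime matches and that has enough free GPUs."""
    for node in nodes:
        if node.runtime == job_runtime and node.free_gpus >= gpus_needed:
            return node.name
    return None  # no suitable node; a real scheduler would queue the job


nodes = [
    Node("nvidia-a100-01", runtime="cuda", free_gpus=2),
    Node("amd-mi200-01", runtime="rocm", free_gpus=4),
]
cuda_target = pick_node("cuda", 1, nodes)
rocm_target = pick_node("rocm", 4, nodes)
```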
